Your AI Memory Is Trapped. Here's Why That's About to Matter.

Siddharth Khurana··6 min read

Last week, 1.5 million people tried to leave ChatGPT. Most of them discovered something uncomfortable: months of carefully built context (their preferences, their projects, their working style) was locked inside a platform they no longer wanted to use.

Anthropic rushed out a memory import tool. Google started testing chat history imports for Gemini. Both solutions involve the same clunky process: export your data, copy it, paste it somewhere else, hope the new system understands it. It's a one-time migration, not a real solution.

And it exposed a deeper problem that nobody in AI is talking about honestly.

Every AI Platform Wants to Own Your Memory

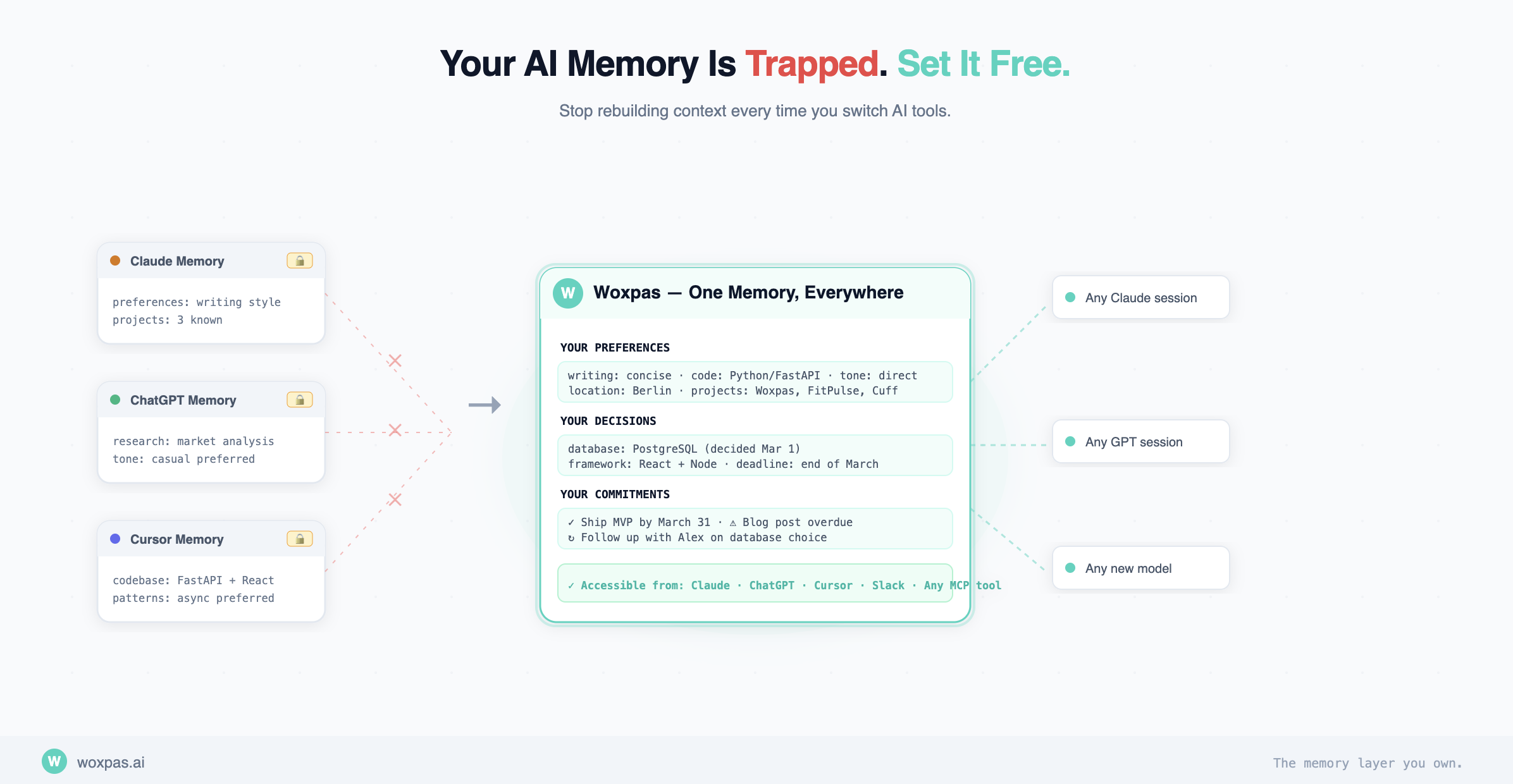

Claude has memory. ChatGPT has memory. Gemini is building memory. Copilot has memory. Each one stores what it learns about you in its own walled garden. None of them talk to each other.

If you use Claude for writing, ChatGPT for research, Cursor for coding, and Llama locally for sensitive work — congratulations, you now have four separate AI identities. Four cold starts every time you switch tools. Four platforms that each know a fraction of who you are and how you work.

This isn't a bug. It's a business model. The more context an AI has about you, the harder it is to leave. Your memory is the lock-in.

The Pentagon Fallout Made This Visible

When OpenAI announced its Pentagon deal on February 28, the backlash was immediate. ChatGPT uninstalls surged 295% in a single day. One-star reviews jumped 775%. The "Cancel ChatGPT" movement went mainstream overnight.

But here's what made it painful: people didn't just want to leave. They wanted to leave with their stuff. Reddit threads filled with guides on how to export data, how to extract memories, how to migrate to Claude or Gemini without starting over. The switching cost wasn't the subscription fee. It was the context.

Anthropic's import tool was a smart response, but it's fundamentally a band-aid. You paste a prompt into ChatGPT, get a text dump, paste it into Claude. It works once. But what happens when you update your preferences next week? What happens when you want to use a different model for a different task? You're back to managing memory across platforms manually.

The Real Problem: Memory Shouldn't Live Inside the Model

Think about how your computer works. Your files don't live inside Chrome. They live on your filesystem, and any application can access them. You don't re-upload your documents every time you switch browsers.

AI memory should work the same way. Your preferences, your working context, your projects, your communication style — that's your data. It should live in a layer you control, accessible by whatever AI you choose to use at any given moment.

This is what we're building with Woxpas.

A Memory Layer You Own

Woxpas is a sovereign memory system that sits between you and your AI tools. Instead of each platform maintaining its own siloed understanding of who you are, Woxpas holds your context in one place and makes it available everywhere.

The simplest version of this is a portable preference file. Plain markdown. Editable by you. Loadable by any LLM at the start of a session. No embeddings, no vector databases, no complex infrastructure. Just a file that says: here's who I am, here's how I work, here's what I'm working on.
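Here is a minimal sketch of loading such a file at the start of a session. It assumes a hypothetical layout (`## Section` headings followed by `- key: value` bullets) and a generic role/content chat-message format. The function names `parse_preferences` and `session_messages` are illustrative and are not part of any Woxpas API.

```python
import tempfile
from pathlib import Path


# Hypothetical file layout ("## Section" + "- key: value"), not the actual Woxpas format.
def parse_preferences(markdown: str) -> dict[str, dict[str, str]]:
    """Turn the markdown preference file into {section: {key: value}}."""
    sections: dict[str, dict[str, str]] = {}
    current = None
    for raw in markdown.splitlines():
        line = raw.strip()
        if line.startswith("## "):
            current = line[3:].strip()
            sections.setdefault(current, {})
        elif current is not None and line.startswith("- ") and ":" in line:
            # Split on the first colon only, so values may themselves contain colons.
            key, value = line[2:].split(":", 1)
            sections[current][key.strip()] = value.strip()
    return sections


def session_messages(path: Path, user_message: str) -> list[dict[str, str]]:
    """Prepend the whole file, verbatim, as a system message in a generic chat format."""
    preferences = path.read_text(encoding="utf-8")
    return [
        {"role": "system", "content": "User preferences:\n\n" + preferences},
        {"role": "user", "content": user_message},
    ]


if __name__ == "__main__":
    example = "# Preferences\n\n## Style\n- tone: concise\n\n## Tools\n- language: Python\n"
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "preferences.md"
        path.write_text(example, encoding="utf-8")
        print(parse_preferences(example))
        print(session_messages(path, "Refactor this script.")[0]["content"])
```

Passing the file through verbatim keeps it human-editable and model-agnostic. The parser is only needed when a tool wants individual fields rather than the whole text.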

When an LLM learns something new about you (your preferred programming language, a project you're working on, how you like your responses formatted), it writes to your Woxpas preference file at the same time as it writes to its own native memory. Dual-write. The LLM's local memory is a convenience cache. Woxpas is the source of truth.
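The dual-write pattern fits in a few lines. In this sketch, `NativeMemory`, `PreferenceFile`, `remember`, and `warm_cache` are hypothetical stand-ins that use in-memory dicts rather than a real platform memory or a file on disk, and preferences are assumed to be simple key/value pairs.

```python
from dataclasses import dataclass, field


@dataclass
class NativeMemory:
    """Stand-in for a platform's built-in memory: a convenience cache."""
    entries: dict[str, str] = field(default_factory=dict)

    def write(self, key: str, value: str) -> None:
        self.entries[key] = value


@dataclass
class PreferenceFile:
    """Stand-in for the user-owned preference file: the source of truth."""
    entries: dict[str, str] = field(default_factory=dict)

    def write(self, key: str, value: str) -> None:
        self.entries[key] = value

    def to_markdown(self) -> str:
        # Render as "- key: value" bullets, sorted for stable diffs.
        return "\n".join(f"- {k}: {v}" for k, v in sorted(self.entries.items()))


def remember(key: str, value: str, native: NativeMemory, source: PreferenceFile) -> None:
    """Dual-write: update the source of truth first, then the platform cache."""
    source.write(key, value)
    native.write(key, value)


def warm_cache(native: NativeMemory, source: PreferenceFile) -> None:
    """Seed an empty cache on a new platform from the source of truth."""
    native.entries = dict(source.entries)


if __name__ == "__main__":
    source = PreferenceFile()
    claude = NativeMemory()
    remember("language", "Python", claude, source)
    remember("format", "short answers", claude, source)
    new_tool = NativeMemory()  # e.g. a model that launched yesterday
    warm_cache(new_tool, source)
    print(source.to_markdown())
    print(new_tool.entries == claude.entries)
```

Writing the source of truth first means a failed cache write leaves the cache stale instead of ahead of the file. A brand-new tool can then be warmed entirely from the file.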

Switch from Claude to ChatGPT? Your preferences are already there. Spin up Llama locally for sensitive work? Load the same file. Try a new model that launched yesterday? It already knows you.

We just shipped this. It's called the preference profile — you can read the full docs on how it works, including auto-injection, dual-write sync, and conflict detection.

Not Just Preferences — A Full Memory Layer

The preference file handles the lightweight stuff: tone, tools, style, role. But Woxpas goes deeper. Our extraction pipeline processes everything you save — meeting notes, ideas, decisions, tasks — and builds a structured knowledge graph of entities, relationships, events, and commitments.

When an AI agent needs to act on your behalf, it doesn't just know that you prefer Python. It knows who John is, what your meeting with him is about, what you decided last week, and what's on your plate today. That's the difference between an AI assistant and an AI that actually works for you.
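To show how that kind of structure supports the John example, here is a toy triple store. It is not Woxpas's extraction pipeline or schema. `Fact`, `KnowledgeGraph`, and the predicate names are hypothetical, and a real system would add typed entities, timestamps, and persistence.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    """One edge in the graph: subject --predicate--> obj (hypothetical schema)."""
    subject: str
    predicate: str
    obj: str


class KnowledgeGraph:
    """Toy in-memory triple store indexed by entity, for illustration only."""

    def __init__(self) -> None:
        self._by_entity: dict[str, list[Fact]] = defaultdict(list)

    def add(self, subject: str, predicate: str, obj: str) -> None:
        fact = Fact(subject, predicate, obj)
        self._by_entity[subject].append(fact)
        if obj != subject:
            self._by_entity[obj].append(fact)

    def about(self, entity: str) -> list[Fact]:
        """Every fact where the entity appears as subject or object."""
        return list(self._by_entity.get(entity, []))

    def context_for(self, entity: str, hops: int = 2) -> set[Fact]:
        """Facts reachable within `hops` steps, e.g. John -> meeting -> decision."""
        seen: set[Fact] = set()
        frontier = {entity}
        for _ in range(hops):
            next_frontier: set[str] = set()
            for node in frontier:
                for fact in self.about(node):
                    if fact not in seen:
                        seen.add(fact)
                        next_frontier.update((fact.subject, fact.obj))
            frontier = next_frontier
        return seen


if __name__ == "__main__":
    graph = KnowledgeGraph()
    # Hypothetical extracted facts: an entity, an event, a decision, a commitment.
    graph.add("John", "works_at", "Acme")
    graph.add("John", "attends", "Pricing review meeting")
    graph.add("Pricing review meeting", "decided", "Move to annual plans")
    graph.add("You", "owes_quote_to", "John")
    for fact in sorted(graph.context_for("John"), key=lambda f: (f.subject, f.predicate)):
        print(fact.subject, fact.predicate, fact.obj)
```

Two hops out from John reach the meeting he attends and the decision recorded for it. A flat preference entry has no way to express that kind of connected context.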

Keeping Memory Clean

The hardest part of any memory system is keeping it accurate. Preferences drift. Facts change. You moved from London to Berlin, but your AI still thinks you're in the UK.

We solve this with conflict detection built directly into the preference file. When a new entry contradicts an existing one, Woxpas flags it. These conflicts surface in your daily digest, the personalised briefing Woxpas already provides, so you can resolve each one with a single tap. The file stays clean, and every AI session going forward has accurate information.
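The detect, surface, and resolve loop can be sketched as follows. This sketch assumes preferences are stored as one value per key, so a "contradiction" simply means a different value for an existing key. `PreferenceStore`, `Conflict`, and the digest wording are hypothetical. Detecting contradictions between free-text entries would need something richer than key equality.

```python
from dataclasses import dataclass, field


@dataclass
class Conflict:
    key: str
    current: str
    proposed: str


@dataclass
class PreferenceStore:
    """Hypothetical store: one value per key; contradictions wait for the user."""
    entries: dict[str, str] = field(default_factory=dict)
    pending: list[Conflict] = field(default_factory=list)

    def propose(self, key: str, value: str) -> None:
        """Called whenever an LLM dual-writes a new entry."""
        existing = self.entries.get(key)
        if existing is None:
            self.entries[key] = value  # new fact: accept directly
        elif existing != value:
            # Contradiction: keep the old value until the user decides.
            self.pending.append(Conflict(key, existing, value))
        # An identical value needs no action.

    def digest(self) -> list[str]:
        """Lines for the daily digest, one per unresolved conflict."""
        return [f"{c.key}: keep '{c.current}' or switch to '{c.proposed}'?" for c in self.pending]

    def resolve(self, index: int, accept_proposed: bool) -> None:
        """The 'single tap': apply or discard the proposed value."""
        conflict = self.pending.pop(index)
        if accept_proposed:
            self.entries[conflict.key] = conflict.proposed


if __name__ == "__main__":
    store = PreferenceStore()
    store.propose("city", "London")
    store.propose("city", "Berlin")  # you moved; the file still says London
    print(store.digest())
    store.resolve(0, accept_proposed=True)
    print(store.entries["city"])
```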

The full cycle looks like this: an LLM learns something about you and dual-writes it to its native memory and to Woxpas. You can edit the file manually at any time. Conflict detection catches contradictions, the daily digest surfaces them, and you resolve them. The clean file then loads into your next session, on any platform.

Why This Matters for Agents

Every major AI company is racing to build agents — AI systems that can take actions on your behalf. But here's the thing: an agent without context is just a tool following instructions. An agent with access to your memory, your preferences, your tasks, your relationships, your decision history — that agent can actually exercise judgment.

And because Woxpas isn't tied to any specific agent framework, LLM provider, or orchestration layer, your context travels with you. OpenClaw, LangChain, CrewAI, your own custom setup — they all become interchangeable execution layers running on top of the same memory.
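One way to picture interchangeable execution layers is a single narrow interface that every agent adapter implements, with memory loaded the same way regardless of which adapter runs. `ExecutionLayer`, `RecordingAgent`, `load_context`, and `dispatch` are hypothetical names. Real adapters for OpenClaw, LangChain, or CrewAI would wrap those frameworks' own APIs, which this sketch does not attempt to reproduce.

```python
from typing import Protocol


class ExecutionLayer(Protocol):
    """Hypothetical interface: anything that can run a task given user context."""

    def run(self, task: str, context: str) -> str: ...


class RecordingAgent:
    """Toy adapter that records its input instead of calling a real framework."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.seen_context: list[str] = []

    def run(self, task: str, context: str) -> str:
        self.seen_context.append(context)
        return f"{self.name} handled: {task}"


def load_context(memory: dict[str, str]) -> str:
    """Render the shared memory as plain text any framework can consume."""
    return "\n".join(f"- {k}: {v}" for k, v in sorted(memory.items()))


def dispatch(agent: ExecutionLayer, task: str, memory: dict[str, str]) -> str:
    """Memory is loaded identically no matter which execution layer runs the task."""
    return agent.run(task, load_context(memory))


if __name__ == "__main__":
    memory = {"language": "Python", "city": "Berlin"}
    agents = [RecordingAgent("agent-a"), RecordingAgent("agent-b")]
    for agent in agents:
        print(dispatch(agent, "Plan my week", memory))
    print(agents[0].seen_context == agents[1].seen_context)
```

Because each agent only receives a plain-text context string, swapping one for another changes nothing about what it knows.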

The moat in AI isn't going to be the model. Models are commoditising fast. The moat is the memory. Whoever holds the richest, most accurate understanding of the user has the most valuable position in the stack. We believe that should be the user themselves.

The Memory Layer You Own

Woxpas sits between you and whatever AI you're using. It gives every model, every agent, every tool access to the same understanding of who you are and how you work.

Models come and go. Last week proved that dramatically. But your memory, your context, your identity as an AI user — that should persist regardless of which model you're talking to, which company you trust, or which framework you're building with.

Your AI memory shouldn't be trapped inside someone else's product. It should be yours.

Take back your memory

Woxpas is in beta now. Create your account, connect your AI tools, and never lose context when switching models again.