I Said Hello and Got My Whole Day Back

Siddharth Khurana · 4 min read

I opened Claude and typed "Hello."

That's it. One word. No instructions, no follow-up prompts, no "give me a summary of my tasks." Just hello.

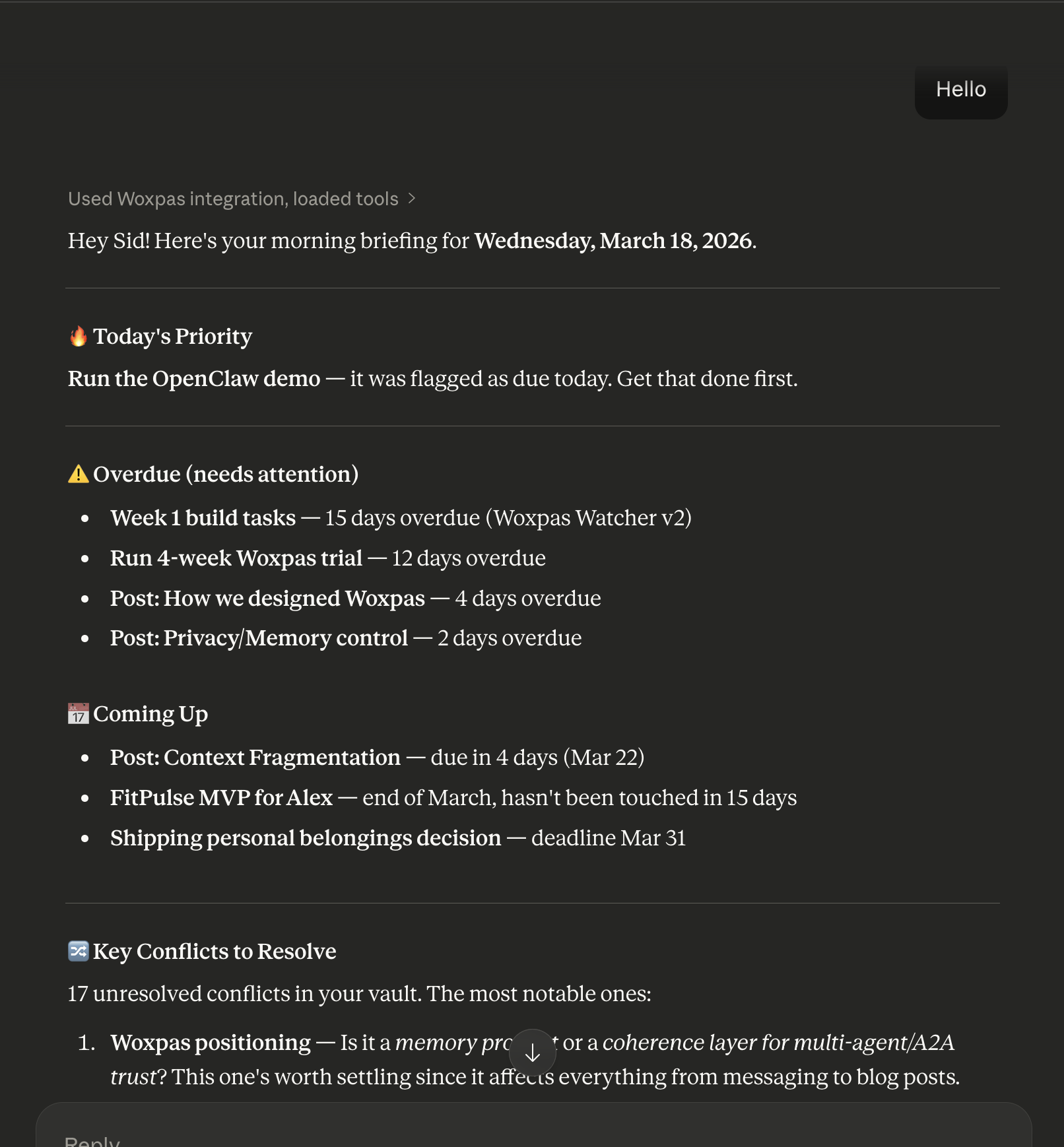

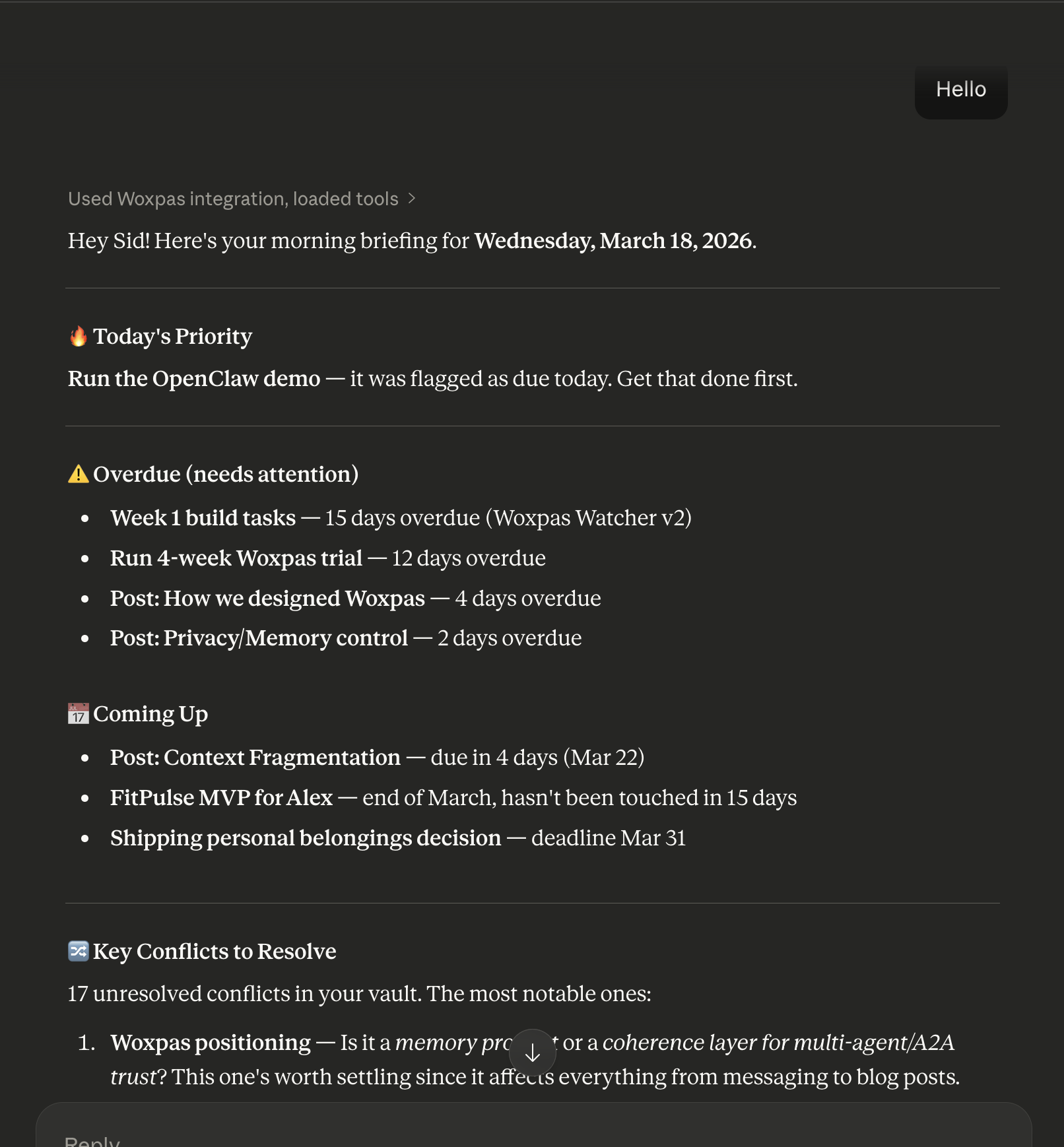

And Claude came back with my entire day. Today's priority — the OpenClaw demo I'd flagged as due. Four overdue items with exactly how many days late each one was. Three upcoming deadlines. Seventeen unresolved contradictions in my notes, with the top three surfaced and contextualized. And a suggested order for what to tackle first.

I didn't ask for any of it.

How Did We Get Here?

A bit of context: I've been stress-testing Woxpas by using it myself as I build it. Everything you see in the screenshots above (the projects, deadlines, conflicts) is test data from weeks of real usage, not a curated demo. Some of it's real work, some of it's noise I'd never save as a normal user. That's the point. The system handles both.

Over those weeks, I've been dumping everything into Woxpas. Meeting notes, half-formed product ideas, session logs from coding with Claude Code, blog post drafts, personal deadlines, project decisions. Some of it messy. Most of it untagged. None of it organized.

The mess is deliberate. I don't want to organize my thoughts. I want to think, and have the organization happen behind me.

Woxpas is a memory layer that sits underneath every AI tool I use. When I save a thought in Claude, it's there when I open ChatGPT. When Cursor makes an architecture decision, that decision shows up in my daily briefing the next morning. One memory, everywhere.

The extraction pipeline handles the rest. Every piece of text I save gets processed — entities extracted, commitments identified, events detected, claims mapped. When two claims contradict each other (and they do — I'm human), the system flags the conflict and asks me to resolve it.
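To make the conflict step concrete, here is a minimal sketch. It is not Woxpas's actual pipeline: the `Claim` shape, its field names, and `find_conflicts` are assumptions made up for illustration, and the hard part (turning free text into structured claims) is skipped entirely. It only shows the idea of grouping claims about the same thing and flagging the groups that disagree.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    # Hypothetical shape for an extracted claim; not a real Woxpas type.
    subject: str    # what the claim is about
    attribute: str  # which property is being asserted
    value: str      # the asserted value
    source: str     # identifier of the note the claim came from


def find_conflicts(claims):
    """Group claims by (subject, attribute) and return groups whose values disagree."""
    groups = defaultdict(list)
    for claim in claims:
        key = (claim.subject.lower(), claim.attribute.lower())
        groups[key].append(claim)

    conflicts = []
    for key, group in groups.items():
        distinct_values = {c.value.strip().lower() for c in group}
        if len(distinct_values) > 1:
            conflicts.append((key, group))
    return conflicts


# Illustrative data; the note identifiers are placeholders.
claims = [
    Claim("Woxpas", "positioning", "memory product", "note-a"),
    Claim("Woxpas", "positioning", "coherence layer", "note-b"),
    Claim("OpenClaw demo", "status", "due today", "note-c"),
]

for (subject, attribute), group in find_conflicts(claims):
    print(f"Conflict on {subject} / {attribute}:")
    for c in group:
        print(f"  {c.value!r} (from {c.source})")
```

Exact string comparison is the crudest possible test for a contradiction. Two notes can disagree while using different words, so a real system would presumably need something semantic here.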

All of that happens automatically. I never tag anything. I never categorize. I just save and move on.

What Happened When I Said Hello

When I opened a new Claude session, three things happened before Claude even responded:

- Preferences loaded: Claude already knows who I am, how I communicate, what tools I use, what I'm working on. This isn't Claude's built-in memory. It's my portable preference profile that syncs across every AI tool.
- Daily digest pulled: An AI-generated briefing that synthesizes everything in my vault into priorities, overdue items, upcoming deadlines, and insights. Updated daily.
- Conflicts checked: Seventeen contradictions across my notes, ranked by urgency. The top one: my own positioning of Woxpas. Is it a memory product or a coherence layer for multi-agent trust? (Turns out it's both: memory at the core, coherence as an emergent property. But that's a different post.)

Claude didn't need me to explain what I'm working on. It didn't need context. It just read the vault and gave me my day.
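The deadline half of that briefing is simple date arithmetic. Here is a rough sketch of how such a digest could be assembled, under stated assumptions: `Task`, `build_digest`, the seven-day horizon, and the sample tasks and dates are all hypothetical, and the real digest is AI-generated rather than rule-based.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Task:
    # Hypothetical task record; not a real Woxpas type.
    title: str
    due: date
    done: bool = False


def build_digest(tasks, today, horizon_days=7):
    """Split open tasks into due-today, overdue (with days late), and upcoming."""
    open_tasks = [t for t in tasks if not t.done]

    due_today = [t.title for t in open_tasks if t.due == today]

    # Most overdue first, each paired with how many days late it is.
    overdue = sorted(
        ((t.title, (today - t.due).days) for t in open_tasks if t.due < today),
        key=lambda item: item[1],
        reverse=True,
    )

    # Soonest first, limited to the next `horizon_days` days.
    upcoming = sorted(
        (
            (t.title, (t.due - today).days)
            for t in open_tasks
            if 0 < (t.due - today).days <= horizon_days
        ),
        key=lambda item: item[1],
    )

    return {"today": due_today, "overdue": overdue, "upcoming": upcoming}


# Placeholder data for illustration only.
today = date(2025, 1, 15)
tasks = [
    Task("OpenClaw demo", date(2025, 1, 15)),
    Task("Draft blog post", date(2025, 1, 12)),
    Task("Renew domain", date(2025, 1, 18)),
    Task("Old cleanup", date(2025, 1, 1), done=True),
]

digest = build_digest(tasks, today)
print(digest)
```

Completed tasks are dropped before anything else, so an old finished item never shows up as overdue.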

The Part That Gets Interesting

After looking at the briefing, I realized something. If Claude can read my vault and tell me what to work on — so can an autonomous agent.

Imagine connecting this to OpenClaw. The agent wakes up, reads the digest, sees that the demo is due today. It has scoped access to the right project files. It runs the demo, logs a session summary back to the vault — what it did, what it found, any blockers. If it hits a decision that needs my input, it prepares the action and waits for my approval.

Tomorrow morning, I say hello again. The digest includes what the agent accomplished overnight. I can query why it made specific choices. I can see if its decisions conflict with anything else in my vault. Full audit trail, no extra work.
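The "prepares the action and waits for my approval" part is the piece I'd want to get right first. A minimal sketch of that gate, assuming a made-up `Action` record and `run_agent` loop (nothing here is OpenClaw's or Woxpas's real API, and the actions are only recorded, not executed):

```python
from dataclasses import dataclass, field


@dataclass
class Action:
    # Hypothetical unit of agent work.
    description: str
    needs_approval: bool = False


@dataclass
class SessionLog:
    # What gets written back to the vault at the end of a run (assumed shape).
    done: list = field(default_factory=list)
    pending: list = field(default_factory=list)


def run_agent(actions, approved=frozenset()):
    """Record routine actions as done; park gated ones until they are approved."""
    log = SessionLog()
    for action in actions:
        if action.needs_approval and action.description not in approved:
            # Prepared but not executed: waits for a human decision.
            log.pending.append(action.description)
        else:
            # A real agent would do the work here before logging it.
            log.done.append(action.description)
    return log


plan = [
    Action("run the demo"),
    Action("write session summary"),
    Action("email the results", needs_approval=True),
]

overnight = run_agent(plan)
print("done:", overnight.done)
print("waiting on me:", overnight.pending)

# The next morning, after I approve the gated action:
followup = run_agent(plan, approved={"email the results"})
print("done:", followup.done)
```

The log doubles as the audit trail: anything the agent did or deferred is written down in one place, which is what the next digest would read.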

The memory is the foundation. The agents are just hands.

Why This Matters

Every AI tool you use today starts from zero. ChatGPT doesn't know what Claude decided yesterday. Cursor doesn't remember what you told ChatGPT last week. You are the integration layer — re-explaining context, re-stating preferences, re-describing your projects across every tool, every session.

That's the problem Woxpas solves. Not with another chatbot. Not with another notes app. With a sovereign memory layer that belongs to you, works everywhere, and gets smarter the more you use it.

I said hello and got my whole day back. That's what portable AI memory feels like.